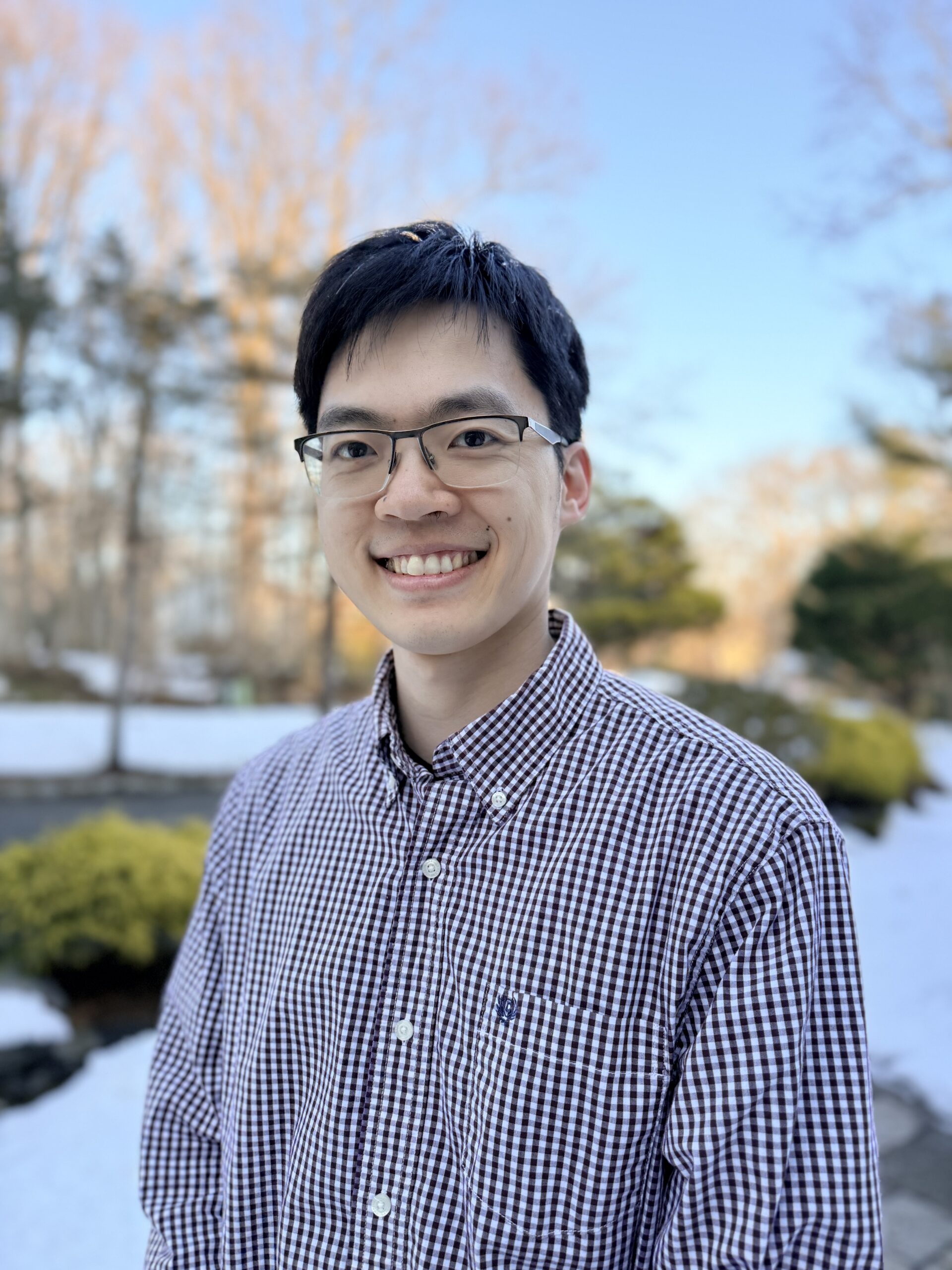

CQE PI Feature – Hengyun (Harry) Zhou

Building Fault-Tolerant Quantum Computer Architectures

Featured in QSEC March newsletter 2026

My research aims to drastically accelerate the timeline towards useful quantum computing by developing new fault-tolerant quantum computer architectures. Quantum computers represent a fundamentally new way of processing information using the laws of quantum mechanics, which is already very interesting scientifically in its own right. What’s more, the ability to perform such computations has the potential to impact real-world problems through certain simulation or optimization tasks. What I like about this area is that it is an interdisciplinary mix of theory and experiment, drawing on many areas of physics, electrical engineering, computer science, and more. Many of the algorithmic insights about how to correct errors and perform quantum computations are the bedrock of building quantum computers, but to realize them, we also have to think about how they translate into real experiments.

I have long been drawn to this boundary between theoretical ideas and experimental reality. My first exposure to this kind of research was as an undergrad in Marin Soljačić’s group at MIT, where I worked in an area called topological photonics. There, I had the chance both to propose new phenomena theoretically and to help realize them in the lab. Since I really enjoyed that experience, I decided to do my PhD in Mikhail Lukin’s lab at Harvard, a group that intertwines theory and experiment. There, I worked as an experimentalist and collaborated closely with Soonwon (now faculty at MIT physics) and Joonhee (now faculty at Stanford), studying dense spin ensembles as a platform for both quantum sensing and many-body physics. To make progress, we had to develop new control methods to keep the noise in check, and new interpretations of the many-body dynamics we were observing. Our experiments guided our theory efforts towards physically relevant directions, which led us to results that had not been realized before, including record-breaking nano-magnetometer sensitivities and novel dynamical phases such as discrete time crystals.

I really enjoyed this research, and found myself more and more drawn toward platforms where one could more systematically control complex quantum behavior, and in particular suppress errors in the system. That naturally led me towards quantum error correction.

Quantum error correction is the idea that quantum information can be stored across many qubits in a redundant way, so that errors can be detected and corrected. This is essential because the error rates needed for large-scale quantum computation are far lower than those achieved by present-day hardware. For many years, error correction at that scale seemed out of reach. However, experimental systems are now becoming good enough to test some of the first building blocks of fault-tolerant architectures, as my collaborators and I demonstrated in experiments at Harvard and QuEra Computing. At the same time, theoretical advances have significantly reduced the cost of error correction. This creates a unique opportunity where scientific ideas and engineering efforts converge to build new systems.
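The redundancy idea can be made concrete with a toy example. The sketch below uses a classical repetition code with majority-vote decoding, which is only an analogue: real quantum codes cannot simply copy a quantum state and instead detect errors through syndrome measurements. All function names and the 10% flip probability are illustrative assumptions, not taken from any experiment described here.

```python
import random
from math import comb


def encode(bit, n):
    """Store one logical bit redundantly as n identical physical bits."""
    return [bit] * n


def apply_noise(codeword, p, rng):
    """Flip each physical bit independently with probability p."""
    return [b ^ int(rng.random() < p) for b in codeword]


def decode(codeword):
    """Recover the logical bit by majority vote (n is assumed odd)."""
    return int(sum(codeword) > len(codeword) / 2)


def exact_failure_rate(n, p):
    """Probability that more than half of the n bits flip, so the vote fails."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range((n + 1) // 2, n + 1)
    )


if __name__ == "__main__":
    rng = random.Random(0)
    p = 0.1  # assumed physical flip probability, for illustration only
    for n in (1, 3, 5, 7):
        trials = 20000
        failures = sum(
            decode(apply_noise(encode(1, n), p, rng)) != 1
            for _ in range(trials)
        )
        print(n, failures / trials, exact_failure_rate(n, p))
```

In this toy model, adding more physical bits lowers the logical failure rate as long as each bit is more likely to be right than wrong, which is the same qualitative trade-off between redundancy and reliability described above.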

A major focus of my recent work has been finding ways to reduce the cost of fault tolerance. Quantum error correction is powerful, but it is also expensive: in most approaches, many physical qubits are needed per logical qubit, and reliable operations require repeated rounds of error correction. If quantum computers are to become practical, we need architectures that can reduce both the number of physical qubits required and the time needed to carry out reliable logical operations. Much of my research has aimed to address this challenge, especially through full-stack co-design, where error correction, algorithms, and hardware are designed together to improve efficiency.

For example, in our work on correlated decoding and algorithmic fault tolerance, carried out with Maddie Cain, Chen Zhao, Dolev Bluvstein, Mikhail Lukin, and others, we challenged a common assumption in the field: that every logical operation must generally be accompanied by many rounds of error correction. Motivated in part by the needs of real experiments, we found that when the algorithm and the error-correction procedure are treated as a single combined object, there can be significant opportunities to reduce overhead.

I have also been very excited by our work on quantum error-correcting codes that may greatly reduce the number of physical qubits needed per logical qubit. These codes (often referred to as qLDPC codes) have looked promising for a long time on paper, but were often seen as too difficult to implement in realistic systems. In our work with Qian Xu, Pablo Bonilla, Nishad Maskara and others, we showed that this does not have to be the case. By designing qLDPC-based architectures and logical operations that make use of the flexibility and parallelism of reconfigurable hardware, especially neutral-atom arrays, we found that these ideas can become much more concrete and experimentally relevant than previously appreciated.

Looking ahead, I think this is a remarkably exciting time for quantum computing. For many years, useful quantum computers felt very far away. That sentiment has changed. There is now growing confidence that they not only can be built, but may be built on a rapid timescale: now that we’re operating below the error-correction threshold, logical error rates will likely improve quickly as the exponential suppression kicks in. Many major challenges remain, but that is exactly what makes this moment so interesting. We are entering a phase where architectural choices, error-correction strategies, and hardware-software co-design may play a defining role in shaping the future of the field.
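To give a feel for what exponential suppression below threshold means, the sketch below evaluates a commonly used heuristic scaling for the logical error rate of a distance-d code, p_L ≈ A (p / p_th)^((d+1)/2). The formula is a rule of thumb rather than a result from this article, and the prefactor, threshold, and physical error rate used here are assumed values chosen purely for illustration.

```python
def logical_error_rate(p, d, p_th=0.01, prefactor=0.1):
    """Heuristic logical error rate for code distance d.

    p         -- physical error rate (assumed value)
    p_th      -- threshold error rate (assumed, illustrative)
    prefactor -- constant A in the heuristic (assumed, illustrative)
    """
    return prefactor * (p / p_th) ** ((d + 1) / 2)


if __name__ == "__main__":
    p = 0.001  # assumed physical error rate, ten times below the assumed threshold
    for d in (3, 5, 7, 9):
        print(d, logical_error_rate(p, d))
```

Under these assumptions, each increase of the distance by two divides the logical error rate by the ratio p_th / p, so modest improvements in physical error rates compound quickly once a system is below threshold. Above threshold the same formula grows with d, which is why crossing the threshold matters so much.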
